See the Unseen

Key words: Machine Learning, Point Cloud, Architectural Heritage, Media Architecture

This dissertation presents a novel methodology for generating point clouds as 3D representation of architecture heritage that no longer exist, such as Old St Paul’s Cathedral, by using a combination of machine learning methods. The proposed approach involves generating multiple viewpoints from a single image, followed by the generation of initial point clouds. These point clouds are then processed to extract multi-scale features, which are subsequently refined by a diffusion model designed to iteratively reduce noise and correct geometric errors. The model was trained on datasets including data from a database and real-world point clouds, with results demonstrating significant improvements in geometric fidelity. However, the point cloud quality, though improved, does not yet reach the precision required for highly detailed applications, making the pipeline more suited for artistic representations and media-related uses. The outcomes of this research highlight the potential of integrating advanced machine learning techniques for heritage architecture reconstruction, particularly for those disappeared before being thoroughly documented.

Dissertation project, Bartett School of Architecture, 2024

Abstract based on this dissertation was accpeted by CDRF2025(Digital Futures), developing full content for further review

Background & Motivation

The preservation of architectural heritage is a field that has gained increasing attention over the past few years, driven by the recognition that historic buildings are not only physical structures but also repositories of cultural identity and historical memory. Unfortunately, many significant architectural works have been lost due to wars, natural disasters, urban development, and neglect. Among these is the Old St. Paul’s Cathedral in London, a Gothic marvel that was destroyed in the Great Fire of London in 1666. Similarly, the tragic fire at Notre-Dame Cathedral in Paris in 2019 is a recent reminder of the vulnerability of such cultural treasures. The fire caused extensive damage to the roof and spire, and the subsequent restoration efforts have heavily relied on digital archives, including 3D scans and detailed photographs, to guide the reconstruction process. This incident underscores the critical importance of digital documentation in the preservation and restoration of architectural heritage. The project "See the Unseen" aims to address this challenge by leveraging state-of-the-art ML techniques to reconstruct Old St. Paul’s Cathedral from limited historical data. By combining the power of pre-trained models, diffusion processes, and attention mechanisms, this project seeks to generate a point cloud 3D model of the cathedral. The digital reconstruction will not only preserve the memory of this iconic structure but also serve as a resource for historians, architects, and the public.

The History of St Paul’s Cathedral

Old St. Paul’s Cathedral, originally founded in 604 AD, was one of the most significant and iconic buildings in medieval England. It served as the seat of the Bishop of London and was a major religious center for over a millennium. The cathedral was renowned not only for its size but also for its architectural grandeur, representing the pinnacle of Gothic architecture in Britain during its time. The cathedral’s most famous feature was its towering spire, which was 489 feet, it was the tallest structure in London and one of the tallest in Europe until it was struck by lightning in 1561 and subsequently collapsed. The final blow to Old St. Paul’s came in 1666 during the Great Fire of London, a catastrophic event that destroyed much of the city. The fire, which started in a bakery on Pudding Lane, quickly spread across London, fueled by strong winds and wooden buildings. Despite efforts to save the cathedral, the fire reached St. Paul’s on the third day, and the building was engulfed in flames. The lead roof melted, causing the stone walls to collapse, and the cathedral was reduced to ruins. In the aftermath of the fire, it was decided that Old St. Paul’s could not be salvaged. Instead, a new cathedral would be built on the site, designed by the renowned architect Sir Christopher Wren. The construction of the new St. Paul’s Cathedral, completed in 1710, marked a new chapter in London’s history, but it also signaled the end of the medieval structure that had stood for over a thousand years. The new cathedral, with its distinctive dome, became an iconic symbol of the city, but the memory of Old St. Paul’s remains an important part of London’s cultural heritage.

Problem Statement

The main challenge addressed in this project is the reconstruction of architectural heritage that has been lost without proper documentation, especially in the form of 3D data, while traditional methods for reconstructing lost buildings rely heavily on historical records, such as drawings and photographs. However, these methods need tons of time and effort and often lead to incomplete or inaccurate representations due to the lack of comprehensive spatial information.

Machine learning, particularly deep learning models, offers a potential solution to this problem by enabling the generation of 3D models from limited and non-3D data sources. The challenge lies in effectively training these models to accurately reconstruct the spatial form of a building that no longer exists, based solely on historical imagery. This requires the development of novel algorithms that can infer missing details and create a faithful representation of the original structure.

The objective of "See the Unseen" is to develop a machine learning-based framework that can generate 3D reconstructions of Old St. Paul’s Cathedral, despite the absence of comprehensive 3D data. The project seeks to demonstrate that it is possible to bring lost architectural heritage back to life using cutting-edge ML techniques.

Objectives and Research Questions

The primary objective of this research is to reconstruct a visually 3D model of Old St. Paul’s Cathedral using machine learning techniques. The following specific objectives will guide the research:

Data Collection and Preprocessing: Gather historical images, drawings, and any available 3D model of builing’s similar to Old St. Paul’s Cathedral as dataset. Develop preprocessing techniques to convert these data sources into formats suitable for machine learning.

Model Development: Design and implement a machine learning model, incorporating techniques such as point cloud generation, image-to-3D model conversion, and diffusion models, to reconstruct the cathedral from the available data.

Model Training and Optimization: Train the model using the collected data, applying techniques such as data augmentation and hybrid modeling to improve the accuracy and realism of the generated 3D models.

Visualization and Potential Application: Develop possible application pipeline for the generated point cloud based on its quality and point quantity. It may not be accurate and detailed enough to be a digital twin.

The research will address the following key questions:

How can machine learning be leveraged to reconstruct 3D models of buildings that no longer exist?

What are the most effective techniques for converting 2D historical imagery into accurate 3D models?

How can the reconstructions be used despite its limitation?

Overview of Approach

The reconstruction process Old St. Paul’s Cathedral involves multiple stages that integrate advanced machine learning techniques. The core of the approach revolves around generating and refining 3D point clouds from a single image using a combination of SyncDreamer, Point-E, Point Transformer, and a diffusion model. Below is a detailed breakdown of each stage:

1.Single-View to Multi-View Image Generation (SyncDreamer):

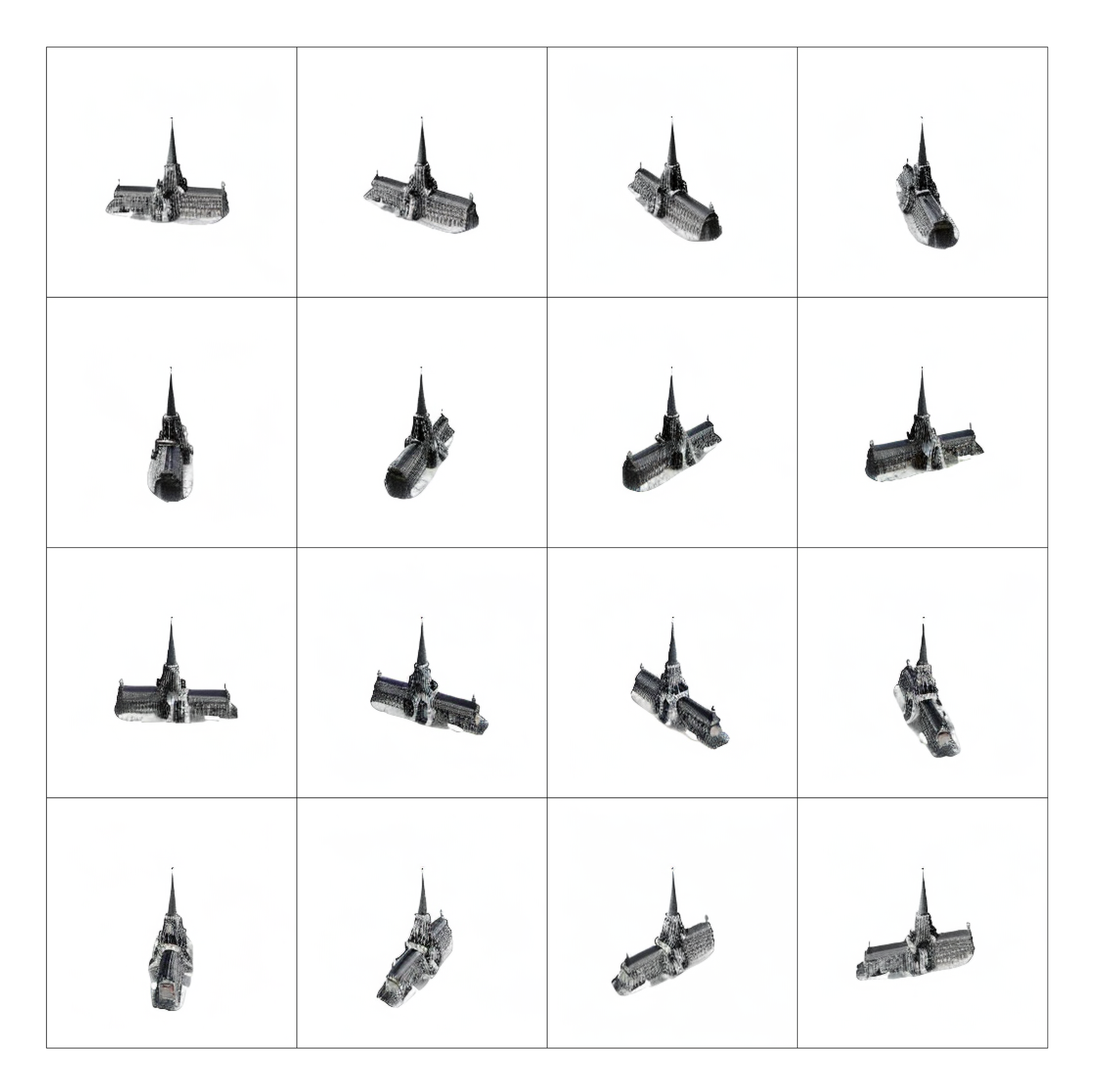

The process begins with a single historical paiting of the cathedral, as shown in Figure below. SyncDreamer, a generative model, is employed to create multiple views of the building by generating images from 16 different angles, as shown in Figure below. SyncDreamer uses a shared latent space to ensure the generated views are consistent with the input image. The output is a set of images capturing the cathedral from various perspectives.

2.Initial Point Cloud Generation (Point-E):

Each image generated by SyncDreamer is fed into Point-E, a pre-trained model designed for generating 3D point clouds from 2D images. Point-E’s transformer-based architecture uses self-attention mechanisms to predict the spatial distribution of points, forming an initial 3D representation. These initial point clouds are coarse but provide a rough geometry of the structure.

3.Feature Extraction (Point Transformer):

The initial point clouds generated by Point-E are processed using Point Transformer, a model designed to extract features from 3D point clouds. Point Transformer applies a multi-head self-attention mechanism to capture both local and global geometric patterns. The output is a feature-rich representation of the point clouds, essential for refining the structure in subsequent stages.

4.Denoising (Diffusion Model):

The extracted features are then processed through a diffusion model. The diffusion model first adds Gaussian noise to the point clouds to simulate imperfections, then learns to denoise them iteratively, producing a refined and accurate point cloud. The diffusion process is guided by the features extracted by Point Transformer, which is the context of each point in diffusion process, ensuring the consistence of shape and the accuracy of reconstruction.

5,Training Process:

This step involves training the transformer feature extractor and diffusion model using a combination of 2 datasets. The model is trained to extract the features from the input point cloud and denoise to ensure that the reconstruction is as close as possible to the reality.

This approach effectively leverages the strengths of each model, SyncDreamer for view synthesis, Point-E for initial geometry, Point Transformer for feature extraction, and the diffusion model for refinement—resulting.

Environment Setup

The training was conducted on Google Colab using an NVIDIA A100 GPU.

Platform: Google Colab

GPU: NVIDIA A100 Tensor Core GPU(40G)

CUDA Version: 11.7 (cu117)

Python Version: 3.10

Several libraries were essential for the implementation, training, and evaluation of the models. The primary libraries and their specific versions are listed below:

PyTorch: torch==2.0.1+cu117, Open3D, Open3D-ML, Kaolin, pyemd, h5py

Output from the Model

After the Training process, the model now is able to extract the features of different dimensions from the initial point clouds generated by Point E and concatenate the features as context embedding with time step as the input of Diffusion model to refine. Below Figure shows the output from the model, while the input is noisy and inaccurate point cloud generated from Point E. As indicated in the Figure below, the output point clouds still contains some inaccurate information. Certain artifacts and inaccuracies persist, especially in regions where the input data was particularly noisy or incomplete. These issues are primarily due to the fact that the input point clouds during inference contained features that the model had not encountered during training. As a result, the model struggled to generalize effectively, leading to less robust outputs. The model’s reliance on the features learned from the training dataset, while generally beneficial, also introduced some unwanted characteristics into the output point clouds. For instance, the model occasionally imposed structural patterns learned from the training data onto the new data, even when those patterns were not present in the original object or scene. This phenomenon, known as overfitting, indicates that the model has memorized specific features from the training data rather than learning a more generalizable representation. Consequently, some of these erroneous features appeared in the final output, detracting from the overall quality and accuracy of the reconstructed point clouds.

Visualization

Given that the quality and density of the generated point clouds do not yet reach the level required for highly detailed, real-world applications, the current pipeline is more suited for media-related purposes and artistic representations of architectural heritage. This methodology can be particularly effective for creating stylized, abstract, or artistic renditions of historical buildings, where the emphasis is on visual aesthetics rather than precise, metrically accurate reconstructions. This application has significant potential in fields like virtual tourism, digital art, and cultural heritage presentations, where the goal is to evoke the essence or atmosphere of a site rather than to replicate it with exact precision. For example, the generated point clouds could be used in AR or VR environments to provide immersive, visually engaging experiences that highlight the historical significance or artistic value of a structure, even if the finer details are not fully captured.

To provide an interactive experience that highlights the differences between the input and output point clouds, an AR showcase has been developed. Users can scan the provided QR code to access the AR environment through an Apple device, such as iPhone or iPad. This platform allows for real-time exploration of the point clouds, enabling users to compare the original noisy or incomplete data with the refined outputs produced by the model.

Conclusion

This dissertation has presented a novel approach to refining 3D point clouds using a combination of a Point Transformer for feature extraction and a diffusion model for noise reduction and structural enhancement. The results demonstrate that the proposed methodology can effectively improve the quality of point clouds, making it suitable for applications in media, digital art, and cultural heritage preservation.

While the model shows strong potential, particularly in digital heritage architecture contexts, several challenges remain. Addressing these limitations through future research will be key to realizing the full potential of the methodology for more precise and accurate 3D reconstructions. Ultimately, the integration of advanced machine learning techniques with creative applications offers exciting possibilities for the future of digital modeling and cultural heritage preservation, to discover the heritage architecture lost in time, to rebuild ancient living environment, and to see the unseen.